Something strange is happening across the internet, and most people can feel it even if they cannot fully explain it. Open X (formerly Twitter), scroll through Facebook, swipe through Instagram, or browse any short-video platform, and you will eventually hit a moment where you pause and ask a simple question: is this real, or is this generated?

That moment of doubt is becoming more common than ever. Videos that look like breaking news, emotional human stories, shocking events, or unbelievable stunts are circulating everywhere. Many of them are not real recordings of life. They are AI-generated content made to imitate reality so well that the human eye can no longer reliably separate truth from fabrication.

We are entering a new phase of the internet where seeing is no longer believing.

The Explosion of Synthetic Content

A few years ago, fake content online usually meant edited photos or misleading captions. Now it has evolved into something far more advanced. Artificial intelligence tools can generate entire video scenes with realistic voices, facial expressions, backgrounds, and even emotional tone.

What used to take a production team weeks can now be done in minutes by a single person using AI tools. This includes fake interviews, fake news clips, fake disaster footage, and even fake personal stories designed to go viral.

Platforms like X, Facebook, and Instagram are built to reward engagement. The more people watch, react, and share, the further the algorithm pushes the content. This creates the perfect environment for AI-generated videos to thrive, because they are often designed specifically to capture attention quickly.

Shock, emotion, and curiosity are the fuel. AI content now delivers all three on demand.

Why AI Videos Spread So Fast

The question is not just why AI-generated content exists but why it spreads so quickly. The answer lies in human psychology combined with platform design.

People are naturally drawn to unusual or emotional content. If a video looks surprising or unbelievable, users are more likely to stop scrolling. That pause is enough for algorithms to register engagement.

Once the system detects engagement, it pushes the content to more users. This creates a chain reaction where even completely fake videos can reach millions of viewers within hours.
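The chain reaction described above can be illustrated with a toy model. This is only a sketch under simplifying assumptions, not how any real platform ranks content: the function name and the parameters `engagement_rate` and `boost_per_engagement` are hypothetical, and real recommendation systems are far more complex.

```python
def simulate_reach(initial_viewers, engagement_rate, boost_per_engagement, rounds):
    """Toy model of engagement-driven amplification.

    Each round, a fraction of the current audience engages with the video,
    and every engagement causes the platform to show it to more new users.
    All parameters are illustrative assumptions, not measured values.
    """
    audience = initial_viewers
    history = [audience]
    for _ in range(rounds):
        # Viewers who pause, react, or share in this round.
        engaged = audience * engagement_rate
        # The next audience is proportional to the engagement just observed.
        audience = round(engaged * boost_per_engagement)
        history.append(audience)
    return history


if __name__ == "__main__":
    # A video that holds attention: audience grows every round.
    print(simulate_reach(1000, 0.2, 10, 10))
    # A video that people scroll past: audience shrinks every round.
    print(simulate_reach(1000, 0.05, 10, 10))
```

In this model the only thing that matters is whether the engagement rate times the boost is above 1. Whether the video is real or fabricated never enters the calculation, which is the point the section is making.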

On platforms like Facebook and Instagram, sharing behavior from friends or family adds another layer of trust. If someone you know shares a video, your brain is more likely to assume it is real. This social trust system was designed for human content, not synthetic media.

Now it is being exploited by AI-generated storytelling.

The Blurring Line Between Real and Fake

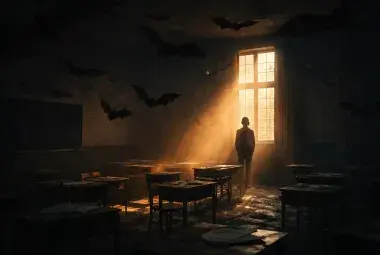

One of the biggest challenges today is that AI-generated videos are no longer obviously fake. Early deepfakes had visual glitches, unnatural blinking, or robotic voices, but modern tools have reduced these flaws dramatically.

Faces move naturally. Lighting behaves correctly. Voices carry emotion and hesitation. Background noise sounds authentic. The result is content that feels real enough to pass casual viewing without suspicion.

This creates a dangerous situation where truth is no longer visually guaranteed. The human brain relies heavily on visual confirmation. When that confirmation becomes unreliable, everything becomes questionable.

Even real events can now be dismissed as fake. And fake events can be accepted as real.

Why People Are Creating Fake AI Videos

It is easy to assume that AI-generated misinformation is always malicious, but the reality is more complex. There are several motivations behind it.

Some creators are chasing attention and viral fame. Social platforms reward high engagement with visibility, which can translate into income or influence. AI tools allow them to produce large volumes of content quickly without needing real footage.

Others use AI content for storytelling experiments, satire, or entertainment. In some cases, viewers know it is fake and still enjoy it as creative fiction.

However, a growing share of AI-generated videos is designed to mislead. These include fake news clips, fabricated tragedies, and manipulated political content intended to shape opinions or provoke emotional reactions.

This mix of harmless creativity and intentional deception makes the internet harder to regulate and harder to trust.

The Role of X, Facebook, and Instagram

Each major platform contributes to this environment in different ways.

X has become a fast-moving information stream where posts and videos spread rapidly with minimal friction. The speed of sharing often outpaces verification.

Facebook remains one of the largest distribution networks for video content, especially through groups and sharing chains where misinformation can travel through trusted social circles.

Instagram focuses heavily on visual storytelling and short videos, where emotional impact matters more than context or sourcing.

Together, these platforms form a global distribution system where AI-generated videos can move across audiences without meaningful resistance.

The issue is not that these platforms intentionally promote fake content. The issue is that their design prioritizes engagement over verification.

The Collapse of Instant Trust Online

In the past, people trusted what they saw online because it was assumed that most content came from real cameras capturing real events. That assumption is no longer safe.

Now every piece of content carries uncertainty. Even authentic videos can be questioned. This leads to a deeper problem known as trust erosion.

When people cannot distinguish between real and fake, they begin to doubt everything. Over time, this can create emotional fatigue. Instead of verifying information, many users simply disengage or believe only what aligns with their existing views.

This is one of the most dangerous side effects of AI-generated media. It does not just create fake content. It weakens the foundation of shared reality.

Why Society Seems to Accept It Anyway

It may feel like people do not care whether content is real anymore, but the situation is more complicated.

Many users are overwhelmed. The volume of content is too large and too fast to verify individually. As a result, people rely on shortcuts like emotion, popularity, or personal bias.

If a video feels believable, it is accepted. If it feels entertaining, it is shared. If it confirms a belief, it is trusted.

There is also a growing culture of entertainment over information. Many users engage with content not to understand reality but to experience reactions. Shock, humor, anger, and curiosity often matter more than accuracy.

This shift does not mean society has abandoned truth entirely. It means truth now competes with entertainment on equal footing.

The Psychological Impact of Living in Synthetic Reality

Constant exposure to AI-generated content has subtle but real psychological effects. One of them is skepticism fatigue. When everything looks questionable, the brain stops trying to evaluate each item carefully.

Another effect is emotional desensitization. When shocking content becomes common, it loses impact. This can push creators to generate even more extreme content to capture attention.

There is also confusion overload. People may find themselves repeatedly asking whether something is real, even when it is not important. This creates mental strain and reduces confidence in personal judgment.

Over time, this environment changes how people relate to information itself.

Can We Still Trust Anything Online?

The answer is not a simple yes or no. Instead, trust is becoming layered.

Some sources remain highly reliable, especially verified institutions, direct recordings from trusted journalists, and original footage with clear provenance. But even these can be misinterpreted when taken out of context.

The future of trust will likely depend on verification systems rather than visual intuition. Watermarking AI-generated content, using authentication signatures for real footage, and improving platform labeling systems may become necessary.
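To make the idea of an authentication signature concrete, here is a minimal sketch using Python's standard library. It assumes a shared secret key between the publisher and the verifier, which is a simplification: real provenance schemes generally rely on public-key signatures and signed metadata. The function names `sign_footage` and `verify_footage` are hypothetical and chosen only for illustration.

```python
import hashlib
import hmac


def sign_footage(video_bytes: bytes, secret_key: bytes) -> str:
    """Return a hex signature tied to the exact bytes of the footage."""
    return hmac.new(secret_key, video_bytes, hashlib.sha256).hexdigest()


def verify_footage(video_bytes: bytes, secret_key: bytes, signature: str) -> bool:
    """Check that the footage still matches the signature issued at capture."""
    expected = sign_footage(video_bytes, secret_key)
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(expected, signature)


if __name__ == "__main__":
    key = b"illustrative-secret-key"  # assumption: held by the trusted source
    original = b"raw bytes of the recorded video"
    signature = sign_footage(original, key)

    print(verify_footage(original, key, signature))               # unmodified footage
    print(verify_footage(original + b" edited", key, signature))  # altered footage
```

A signature like this can only show that a file is unchanged since a trusted source signed it. It says nothing about footage that was never signed, which is one reason no single system is enough.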

However, no system is perfect. Technology evolves quickly, and so do methods of manipulation.

The responsibility will increasingly fall on both platforms and users.

What This Means for the Future of the Internet

The internet is shifting from an information space into a simulation space where reality and artificial creation coexist seamlessly. This does not mean truth disappears, but it becomes harder to access without effort.

We may eventually reach a point where most users assume content is synthetic until proven otherwise. That would be a reversal of how the internet originally worked.

In such a world, credibility becomes more valuable than virality. Sources will matter more than visuals. Context will matter more than clips.

But the transition period we are in now is messy. It is filled with uncertainty, experimentation, and rapid change.

Finding Ground in a Noisy Digital World

Even in this chaotic environment, there are ways to stay grounded. Slowing down consumption helps. Checking multiple sources helps. Understanding how AI-generated media works helps reduce confusion.

But perhaps the most important step is awareness. Simply recognizing that not everything online is what it appears to be already changes how we interpret content.

The internet is no longer a mirror of reality. It is a mix of reality, interpretation, and artificial creation layered together.

As users, we are now participants in shaping what is believed and what is ignored. Every share, every comment, and every pause contributes to the direction of this new digital ecosystem.

Final Reflection

What is happening to X, Facebook, Instagram, and so many other social platforms is not just a technological shift. It is a cultural transformation. AI-generated videos are not simply changing content. They are changing perception.

We are learning, sometimes painfully, that truth online is no longer automatic. It must be examined, verified, and sometimes questioned even when it feels obvious.

The internet is not dying. It is evolving into something more complex, more powerful, and more uncertain.

And in that uncertainty, the challenge for all of us is not just to consume information, but to learn how to recognize what is real in a world where reality itself can be generated.